Unveiling the Top 5 Unsupervised Machine Learning Algorithms in Data Science

Anubhav Yadav

Student at SRM University || Aspiring Data Scientist || "Top 98" AI for Impact APAC Hackathon 2024 by Google Cloud || Data Analyst || Machine Learning || SQL || Python || GenAI || Power BI || Flask

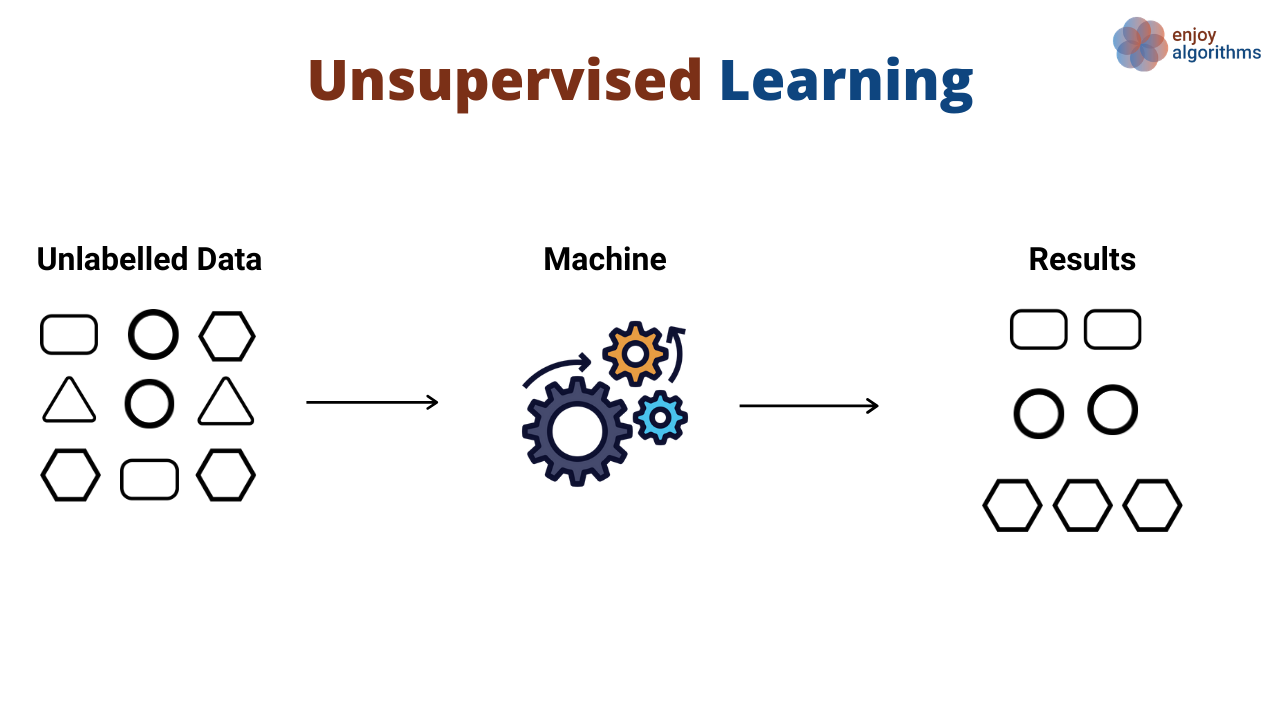

In the vast landscape of data science, unsupervised learning stands as a pillar of exploration, where algorithms uncover hidden patterns and structures within data without explicit guidance. Today, let's embark on a journey to discover the top five unsupervised machine learning algorithms, unraveling their complexities into simple, digestible insights.

1. K-Means Clustering:

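Grouping data with centroids – K-Means Clustering partitions data into k clusters by repeatedly assigning each data point to its nearest centroid and then moving each centroid to the mean of the points assigned to it, until the assignments stop changing. The number of clusters k must be chosen in advance, and the result can depend on where the centroids start. Simple and efficient, K-Means is a versatile algorithm used for clustering tasks in many domains.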
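The assign-then-update loop described above can be sketched in a few lines of NumPy. This is a minimal illustration rather than a production implementation: the function name `kmeans`, the random choice of starting centroids, and the toy two-blob data are all assumptions made for the example.

```python
import numpy as np

def kmeans(points, k, n_iters=100, seed=0):
    """Basic K-Means: assign points to the nearest centroid, then recompute means."""
    rng = np.random.default_rng(seed)
    # Assumed initialization: pick k distinct data points at random.
    centroids = points[rng.choice(len(points), size=k, replace=False)]
    for _ in range(n_iters):
        # Assignment step: distance from every point to every centroid.
        distances = np.linalg.norm(points[:, None, :] - centroids[None, :, :], axis=2)
        labels = distances.argmin(axis=1)
        # Update step: each centroid moves to the mean of its assigned points
        # (an empty cluster keeps its previous centroid).
        new_centroids = np.array([
            points[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        if np.allclose(new_centroids, centroids):
            break  # centroids stopped moving
        centroids = new_centroids
    return labels, centroids

# Made-up toy data: two blobs around (0, 0) and (5, 5).
rng = np.random.default_rng(42)
data = np.vstack([
    rng.normal(loc=[0.0, 0.0], scale=0.3, size=(50, 2)),
    rng.normal(loc=[5.0, 5.0], scale=0.3, size=(50, 2)),
])
labels, centroids = kmeans(data, k=2)
print(centroids)
```

Because the outcome can depend on the starting centroids, it is common to run the algorithm several times with different seeds and keep the best result.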

Read more: https://en.wikipedia.org/wiki/K-means_clustering

2. Hierarchical Clustering:

Tree of similarities – Hierarchical Clustering organizes data into a hierarchy of nested clusters, drawn as a dendrogram that shows how data points and clusters relate to one another. The hierarchy is built either bottom-up, by repeatedly merging the most similar clusters (agglomerative), or top-down, by repeatedly splitting clusters (divisive). Cutting the dendrogram at different heights gives clusterings at different levels of detail.
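To make the merging process concrete, here is a small sketch of the bottom-up (agglomerative) variant in NumPy. It assumes single linkage, where the distance between two clusters is the smallest distance between any pair of their points; the function name `agglomerative` and the five toy points are invented for illustration.

```python
import numpy as np

def agglomerative(points, n_clusters):
    """Naive single-linkage agglomerative clustering on a small dataset."""
    # Pairwise Euclidean distances between all points.
    dist = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=2)
    clusters = [[i] for i in range(len(points))]  # every point starts alone
    merges = []  # record of (cluster_a, cluster_b, distance) for each merge
    while len(clusters) > n_clusters:
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                # Single linkage: closest pair of points across the two clusters.
                d = dist[np.ix_(clusters[a], clusters[b])].min()
                if best is None or d < best[0]:
                    best = (d, a, b)
        d, a, b = best
        merges.append((clusters[a], clusters[b], float(d)))
        clusters[a] = clusters[a] + clusters[b]  # merge b into a
        del clusters[b]
    return clusters, merges

# Made-up toy data: two tight pairs and one distant point.
points = np.array([[0.0, 0.0], [0.0, 1.0], [4.0, 0.0], [4.0, 1.0], [10.0, 0.0]])
clusters, merges = agglomerative(points, n_clusters=3)
print(clusters)
print(merges)
```

The `merges` list is the information a dendrogram displays: which clusters were joined and at what distance. On this toy data the two tight pairs merge first, leaving the distant point on its own.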

3. Principal Component Analysis (PCA):

Dimensionality reduction with eigenvectors – PCA projects high-dimensional data onto a lower-dimensional space while preserving as much variance as possible. It finds orthogonal directions, the principal components, which are the eigenvectors of the data's covariance matrix, ordered by how much variance each one captures (given by the corresponding eigenvalue). PCA aids in visualization, feature extraction, and noise reduction.
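One way to see how this works is to compute PCA directly from the covariance matrix. The sketch below assumes the eigendecomposition route (libraries often use SVD instead); the function name `pca` and the synthetic three-column dataset are illustrative only.

```python
import numpy as np

def pca(data, n_components):
    """PCA via eigendecomposition of the covariance matrix."""
    centered = data - data.mean(axis=0)           # PCA works on mean-centered data
    cov = np.cov(centered, rowvar=False)          # features are in columns
    eigenvalues, eigenvectors = np.linalg.eigh(cov)  # eigh returns ascending order
    order = np.argsort(eigenvalues)[::-1]         # sort by variance, largest first
    eigenvalues = eigenvalues[order]
    eigenvectors = eigenvectors[:, order]
    components = eigenvectors[:, :n_components]   # orthogonal directions to keep
    projected = centered @ components             # data in the reduced space
    explained_ratio = eigenvalues[:n_components] / eigenvalues.sum()
    return projected, components, explained_ratio

# Made-up data: the second column is almost a multiple of the first,
# and the third column is small noise.
rng = np.random.default_rng(0)
x = rng.normal(size=200)
data = np.column_stack([
    x,
    2.0 * x + 0.1 * rng.normal(size=200),
    0.1 * rng.normal(size=200),
])
projected, components, explained_ratio = pca(data, n_components=2)
print(explained_ratio)
```

Since two of the three columns move together, the first component should account for nearly all of the variance here, which is what makes dropping the remaining dimensions cheap.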


4. t-Distributed Stochastic Neighbor Embedding (t-SNE):

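Visualizing high-dimensional data – t-SNE is a nonlinear technique that reduces the dimensionality of data while preserving local structure, making it well suited to visualizing high-dimensional datasets in two or three dimensions. By keeping points that are similar in the original space close together in the embedding, t-SNE can reveal clusters and patterns that are hard to see in high-dimensional space. Distances between well-separated clusters in the plot are generally not meaningful, and the picture can change with the perplexity setting.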
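In practice t-SNE is usually run through a library rather than written by hand. The sketch below assumes scikit-learn is installed and uses its `TSNE` class; the 20-dimensional toy data and the perplexity value are arbitrary choices for illustration, not recommendations.

```python
import numpy as np
from sklearn.manifold import TSNE

# Made-up data: two groups of 40 points each in 20 dimensions.
rng = np.random.default_rng(0)
high_dim = np.vstack([
    rng.normal(loc=0.0, scale=1.0, size=(40, 20)),
    rng.normal(loc=8.0, scale=1.0, size=(40, 20)),
])

# Perplexity roughly controls how many neighbors each point "pays attention to";
# it must be smaller than the number of samples.
tsne = TSNE(n_components=2, perplexity=15, init="pca", random_state=0)
embedding = tsne.fit_transform(high_dim)  # one 2-D coordinate per input row

print(embedding.shape)
```

The resulting `embedding` can be passed to any scatter-plot function. Trying a few perplexity values is a reasonable habit, since the layout is sensitive to it.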

5. Gaussian Mixture Models (GMM):

Modeling data with probabilistic components – GMM represents data as a mixture of several Gaussian distributions, allowing flexible modeling of complex data distributions. Its parameters (each component's weight, mean, and covariance) are typically estimated with the Expectation-Maximization (EM) algorithm. Instead of a hard label, GMM gives each data point a probability of belonging to each cluster.
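The parameter estimation can be illustrated with a small one-dimensional example. This sketch assumes the Expectation-Maximization (EM) algorithm, the usual way to fit a GMM, and a simple quantile-based starting point; the function name `fit_gmm_1d` and the synthetic data are made up for the example.

```python
import numpy as np

def fit_gmm_1d(x, k, n_iters=100):
    """Fit a 1-D Gaussian mixture with EM; returns weights, means, variances, responsibilities."""
    # Assumed initialization: spread the means over evenly spaced quantiles.
    means = np.quantile(x, (np.arange(k) + 1) / (k + 1))
    variances = np.full(k, x.var())
    weights = np.full(k, 1.0 / k)
    for _ in range(n_iters):
        # E-step: probability that each point came from each component.
        density = np.exp(-0.5 * (x[:, None] - means) ** 2 / variances)
        density /= np.sqrt(2.0 * np.pi * variances)
        weighted = weights * density
        resp = weighted / weighted.sum(axis=1, keepdims=True)
        # M-step: re-estimate parameters from the soft assignments.
        nk = resp.sum(axis=0)
        weights = nk / len(x)
        means = (resp * x[:, None]).sum(axis=0) / nk
        variances = (resp * (x[:, None] - means) ** 2).sum(axis=0) / nk + 1e-6
    return weights, means, variances, resp

# Made-up data: two Gaussian groups centered at -3 and 3.
rng = np.random.default_rng(1)
x = np.concatenate([rng.normal(-3.0, 0.5, 100), rng.normal(3.0, 0.5, 100)])
weights, means, variances, resp = fit_gmm_1d(x, k=2)
print(weights, means, variances)
```

Each row of `resp` holds one point's membership probabilities across the components, which is the soft counterpart to the hard labels K-Means produces.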

Conclusion:

In summary, these top five unsupervised machine learning algorithms offer a diverse toolkit for uncovering hidden patterns and structures within data. From the simplicity of K-Means Clustering to the visual richness of t-SNE and the probabilistic modeling of GMM, each algorithm brings its unique strengths to the table. By understanding their principles and applications, data scientists can unlock the full potential of unsupervised learning in data exploration and analysis.