Essential Benchmarks and Metrics for Responsible AI

The rapid advancement of Large Language Models (LLMs) such as GPT, LLaMA, and Gemini has reshaped artificial intelligence and opened up new applications across many sectors. That capability carries responsibility: ensuring these models are reliable, ethical, and beneficial requires comprehensive benchmarks and precise evaluation metrics.

Why We Need Benchmarks and Metrics

Consider an analogy: judging an athlete solely by appearance yields only superficial insight. A real assessment looks at performance in specific events, consistency over time, and adherence to established rules. Assessing LLMs likewise has to go beyond casual observation and rely on rigorous, standardized evaluations that test whether their performance meets ethical standards and holds up in real-world use.

The Landscape of Modern LLM Benchmarks

Today’s AI assessments reach beyond simple linguistic tasks, probing deeper into the core facets of intelligence and capability:

1. Abstract Reasoning (ARC)

2. Multimodal Understanding (MMMU)

3. Advanced Scientific Reasoning (GPQA)

4. Multitask Knowledge Transfer (MMLU)

5. Code Generation and Logical Reasoning (HumanEval, SWE-bench, Codeforces)

6. Tool and API Integration (TAU-Bench)

7. Commonsense Reasoning and NLP Proficiency (SuperGLUE, HellaSwag)

8. Mathematical Reasoning (MATH Dataset, AIME 2025)

Beyond Benchmarks: Crucial Evaluation Metrics

Benchmarks create scenarios for evaluation, but metrics translate model performance into quantifiable insights:

1. Accuracy

2. Lexical Similarity (BLEU, ROUGE, METEOR)

3. Relevance and Informativeness (BERTScore, MoverScore)

4. Bias and Fairness Metrics

5. Efficiency Metrics

6. LLM-as-a-Judge
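To make the first two metrics in this list concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not how any particular benchmark or library computes its scores: the function names `accuracy` and `unigram_f1` are hypothetical, tokenization is plain lowercase whitespace splitting, and the overlap score is only a simplified ROUGE-1-style F1 rather than the full ROUGE, BLEU, or METEOR definitions.

```python
from collections import Counter


def accuracy(predictions, references):
    """Fraction of predictions that exactly match their reference answer."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        return 0.0
    correct = sum(p == r for p, r in zip(predictions, references))
    return correct / len(references)


def unigram_f1(candidate, reference):
    """Simplified ROUGE-1-style F1 based on clipped unigram overlap.

    Assumption: tokens are lowercase, whitespace-separated words.
    """
    cand_counts = Counter(candidate.lower().split())
    ref_counts = Counter(reference.lower().split())
    # Counter intersection keeps the minimum count of each shared token.
    overlap = sum((cand_counts & ref_counts).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand_counts.values())
    recall = overlap / sum(ref_counts.values())
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    # Multiple-choice answers, as in a knowledge benchmark.
    print(accuracy(["B", "C", "A", "D"], ["B", "C", "D", "D"]))
    # Generated text compared with a reference text.
    print(unigram_f1("the cat sat on the mat", "the cat is on the mat"))
```

Accuracy suits tasks with a single correct answer, such as multiple-choice questions. Overlap scores suit free-form output, though they reward shared wording rather than shared meaning, which is the gap that embedding-based metrics such as BERTScore are meant to address.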

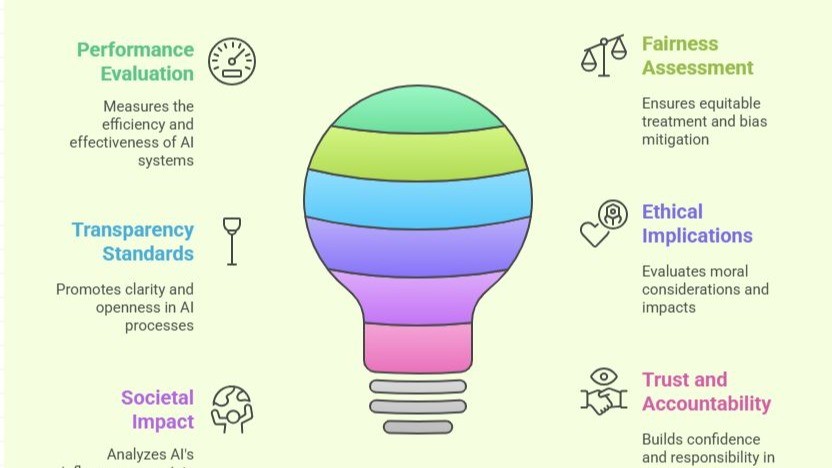

The Significance of Robust Evaluations

These benchmarks and metrics are not merely academic exercises. They are crucial to:

Looking Forward: Future Directions in LLM Evaluation

As LLM technology rapidly evolves, evaluation methods must adapt and be refined alongside it. Key areas for future emphasis include:

By embracing rigorous evaluation methodologies, we can navigate the complexities of Large Language Models effectively, transforming them from powerful tools into ethical, trustworthy partners in innovation and societal advancement.